Quantum computing is currently being discussed in settings ranging from banking to merchant shipping. This still-emerging technology has now been taken further, or rather deeper: a hundred meters below the French-Swiss border lies the largest machine in the world, the Large Hadron Collider (LHC), operated by CERN, the European particle physics laboratory. Struggling to make sense of the mountains of data produced by such a massive system, CERN scientists have called on IBM's quantum team for help.

This collaboration on LHC data could have major implications for our understanding of matter, antimatter and even dark matter, because the LHC is one of CERN's most important tools for probing the fundamental laws that govern the particles and forces that make up the universe.

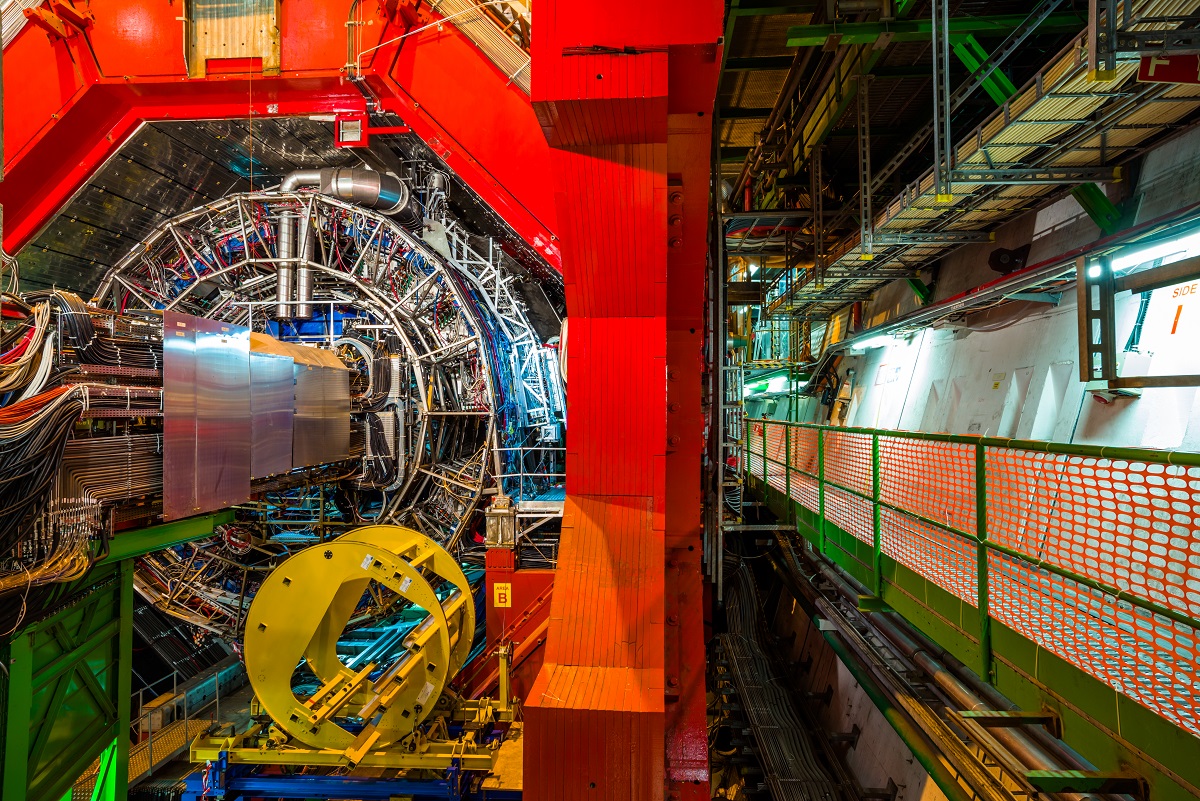

Built as a 27-kilometre ring, the LHC accelerates beams of particles such as protons and heavy ions to nearly the speed of light and then makes them collide, with the collisions observed by eight detectors located around the accelerator. About a billion particle collisions occur inside the LHC every second, producing petabytes of data that are currently processed by a million CPUs at 170 sites around the world. The work is spread out this way because no single site could store that much data on its own.

The limits of traditional computing

CERN's job is far from simply storing data. All the information generated by the LHC is made available for processing and analysis, so that scientists can formulate hypotheses, gather evidence and make discoveries. It was by observing particle collisions, for instance, that CERN researchers discovered the Higgs boson in 2012, an elementary particle linked to the mechanism that gives other fundamental particles their mass. The discovery was hailed as a major breakthrough in physics.

To do this, scientists rely on sophisticated machine learning algorithms that scan LHC data to distinguish useful collisions, such as those that produce Higgs bosons, from all the others. “So far, scientists have used classical machine learning techniques to analyze raw data captured by particle detectors, and automatically select the best candidate events,” the IBM researchers explained in a blog post.

This is where Big Blue comes in. “We believe we can greatly improve this selection process by boosting machine learning with quantum computing,” they say. As data volumes grow, classical machine learning models are fast approaching the limits of their capabilities, and it is at this point that quantum computers could prove useful.

Quantum machine learning

In a sense, the qubits that make up a quantum computer can encode far more information than classical bits: the state of n qubits is described by 2^n amplitudes, so a quantum computer can represent and process many more dimensions than a conventional machine. In principle, a quantum computer with enough qubits could therefore perform extremely complex calculations that would take classical computers centuries. With that in mind, CERN partnered with the IBM quantum team in 2018 to explore exactly how quantum technologies could be applied to advance scientific discovery.

Quantum machine learning soon emerged as a possible answer to the data-analysis problems weighing on CERN teams. The idea is to harness qubits to expand what is known as the feature space, the space of features on which an algorithm bases its classification decisions. In a larger feature space, a quantum computer might detect patterns and perform classification even in a huge data set where a classical computer would see only random noise.
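To make the idea of a quantum feature space concrete, here is a minimal sketch that simulates the state vector classically. It assumes a simple angle encoding (one qubit per feature, each rotated by RY(x)), which is an illustrative choice and not necessarily the feature map IBM used; the helper names `encode` and `quantum_kernel` and the sample events are hypothetical. It shows n features turning into 2^n amplitudes, and the similarity of two events taken as the squared overlap of their quantum states, a "fidelity" kernel of the kind a QSVM can rely on.

```python
import math


def encode(features):
    """Angle-encode each feature on its own qubit: RY(x)|0> = cos(x/2)|0> + sin(x/2)|1>.

    Returns the 2**n real amplitudes of the resulting n-qubit product state.
    (Hypothetical helper; feature maps used in practice usually add entangling gates.)
    """
    state = [1.0]
    for x in features:
        qubit = (math.cos(x / 2), math.sin(x / 2))
        # Kronecker product: each additional qubit doubles the state dimension.
        state = [a * b for a in state for b in qubit]
    return state


def quantum_kernel(x, y):
    """Fidelity kernel k(x, y) = |<psi(x)|psi(y)>|^2 between two encoded events."""
    overlap = sum(a * b for a, b in zip(encode(x), encode(y)))
    return overlap ** 2


event_a = [0.1, 0.5, 1.2]  # made-up, already-scaled detector features
event_b = [0.2, 0.4, 1.0]
print(len(encode(event_a)))              # 3 features -> 8 amplitudes
print(quantum_kernel(event_a, event_a))  # identical events: 1.0 up to rounding
print(quantum_kernel(event_a, event_b))  # similar events: close to 1
```

Because this particular encoding creates no entanglement, its kernel reduces to a product of cos² terms that a classical computer evaluates trivially, so it cannot offer any quantum advantage; it only illustrates how the feature space grows as 2^n. Feature maps proposed for a quantum advantage add entangling gates so that the kernel is believed to be hard to compute classically.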

Applied to CERN research, a quantum machine learning algorithm could sift through the raw data produced by the LHC and pick out signatures of the Higgs boson, for example, where classical computers would struggle to see anything.

In search of the boson

To assist CERN scientists, the IBM teams developed a quantum algorithm called the Quantum Support Vector Machine (QSVM), designed to identify collisions that produce Higgs bosons. The algorithm was trained on a test dataset built from data generated by one of the LHC detectors, and was run on both quantum simulators and physical quantum hardware.
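In the quantum-kernel approach to QSVM, the quantum processor is used only to estimate similarity (kernel) values between pairs of events; the support vector machine itself is then trained classically on those values. The sketch below illustrates that split under several assumptions: the "quantum" kernel is the simple angle-encoding fidelity computed in closed form, the six events and their signal/background labels are made up, and the SVM is a bias-free variant trained by dual coordinate ascent rather than whatever solver IBM actually used. The names `kernel`, `train_svm` and `predict` are hypothetical.

```python
import math


def kernel(x, y):
    """Stand-in for a kernel value estimated on quantum hardware (assumption).

    For angle-encoded product states, |<psi(x)|psi(y)>|^2 = prod_i cos^2((x_i - y_i) / 2).
    """
    k = 1.0
    for a, b in zip(x, y):
        k *= math.cos((a - b) / 2) ** 2
    return k


def train_svm(events, labels, c=10.0, epochs=100):
    """Bias-free kernel SVM trained by dual coordinate ascent.

    Maximizes sum(alpha) - 1/2 * sum_ij alpha_i alpha_j y_i y_j K_ij
    subject to 0 <= alpha_i <= c, using only the precomputed kernel matrix.
    """
    n = len(events)
    gram = [[kernel(events[i], events[j]) for j in range(n)] for i in range(n)]
    alpha = [0.0] * n
    for _ in range(epochs):
        for i in range(n):
            margin = labels[i] * sum(alpha[j] * labels[j] * gram[i][j] for j in range(n))
            # Exact maximization along coordinate i, clipped to the box [0, c].
            alpha[i] = min(c, max(0.0, alpha[i] + (1.0 - margin) / gram[i][i]))
    return alpha


def predict(events, labels, alpha, x):
    """Return +1 (signal) or -1 (background) from the sign of the decision function."""
    score = sum(a * y * kernel(e, x) for a, y, e in zip(alpha, labels, events))
    return 1 if score >= 0 else -1


# Made-up, pre-scaled two-feature events: +1 = "Higgs-like" signal, -1 = background.
events = [[0.2, 0.3], [0.4, 0.1], [0.3, 0.5], [2.6, 2.4], [2.3, 2.8], [2.7, 2.6]]
labels = [1, 1, 1, -1, -1, -1]
alpha = train_svm(events, labels)
print(predict(events, labels, alpha, [0.25, 0.35]))  # expected: 1
print(predict(events, labels, alpha, [2.5, 2.5]))    # expected: -1
```

Note that `train_svm` and `predict` touch the data only through `kernel(...)`: replacing it with kernel values estimated on a quantum device would leave the classical training loop unchanged. Dropping the bias term keeps each update to a single clipped step per coordinate; a production SVM solver would also fit a bias and scale to far larger datasets.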

In both cases, the results were promising. The simulation study, conducted on Google TensorFlow Quantum, IBM Quantum and Amazon Braket, used up to 20 qubits and a dataset of 50,000 events, and performed as well as, if not better than, classical counterparts on the same problem. The hardware experiment was run on IBM's quantum machines using 15 qubits and a 100-event dataset. It showed that, despite the noise affecting the quantum computations, classification quality remained comparable to the best results of classical simulations.

“This once again confirms the potential of the quantum algorithm for this class of problems,” IBM says. “The quality of our results indicates a possible demonstration of a quantum advantage for data classification with quantum support vector machines in the near future.” This does not mean, however, that a quantum advantage has actually been demonstrated. The quantum algorithm developed by IBM produced results similar to those of classical methods on today's limited quantum processors, and these systems are still in their infancy.

A long struggle

With only a handful of qubits, today's quantum computers cannot yet perform useful calculations. They are also held back by the fragility of qubits, which are highly sensitive to environmental disturbances and still prone to errors. For now, IBM and CERN are counting on future improvements in quantum hardware to show that quantum algorithms offer a tangible advantage in practice, not just in theory.

“Our results show that quantum machine-learning algorithms for classifying data can be as accurate as classical algorithms on noisy quantum computers, paving the way for demonstrating a quantum advantage in the near future,” the IBM research team emphasized. That prospect gives CERN scientists hope.

The Large Hadron Collider is currently being upgraded, and its next iteration, scheduled to enter service in 2027, is expected to produce 10 times more collisions than the current machine. The volume of data will only keep growing, and it won't be long before traditional processors can no longer handle it all.

Source: ZDNet.com
